Gen AI News Talk

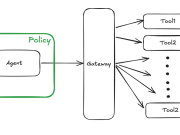

Secure AI agents with Policy in Amazon Bedrock AgentCore

Deploying AI agents safely in regulated industries is challenging. Without proper boundaries, agents that access sensitive data or execute transactions can pose significant security...

Improve operational visibility for inference workloads on Amazon Bedrock with new CloudWatch metrics for...

As organizations scale their generative AI workloads on Amazon Bedrock, operational visibility into inference performance and resource consumption becomes critical. Teams running latency-sensitive applications...

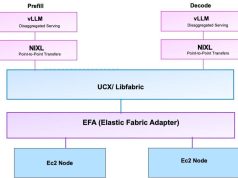

Fine-tuning NVIDIA Nemotron Speech ASR on Amazon EC2 for domain adaptation

This post is a collaboration between AWS, NVIDIA and Heidi.

Automatic speech recognition (ASR), often called speech-to-text (STT), is becoming increasingly critical across industries like...

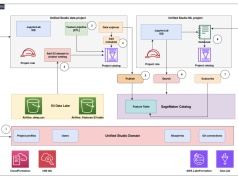

Multimodal embeddings at scale: AI data lake for media and entertainment workloads

This post shows you how to build a scalable multimodal video search system that enables natural language search across large video datasets using Amazon...

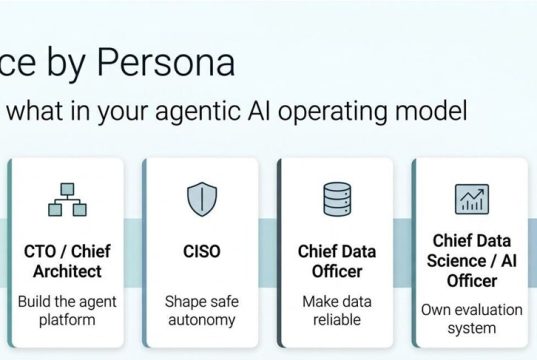

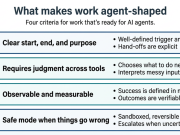

Operationalizing Agentic AI Part 1: A Stakeholder’s Guide

Agentic AI isn’t a feature you turn on. It’s a shift in how work is defined, who does it, and how decisions get made.

Most...

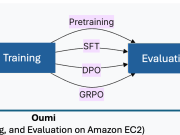

Accelerate custom LLM deployment: Fine-tune with Oumi and deploy to Amazon Bedrock

This post is cowritten by David Stewart and Matthew Persons from Oumi.

Fine-tuning open source large language models (LLMs) often stalls between experimentation and production....

Access Anthropic Claude models in India on Amazon Bedrock with Global cross-Region inference

The adoption and implementation of generative AI inference has increased with organizations building more operational workloads that use AI capabilities in production at scale....

Run NVIDIA Nemotron 3 Nano as a fully managed serverless model on Amazon Bedrock

This post is cowritten with Abdullahi Olaoye, Curtice Lockhart, and Nirmal Kumar Juluru from NVIDIA.

We are excited to announce that NVIDIA’s Nemotron 3 Nano is...

Building a custom model provider for Strands Agents with LLMs hosted on SageMaker AI endpoints

Organizations increasingly deploy custom large language models (LLMs) on Amazon SageMaker AI real-time endpoints using their preferred serving frameworks—such as SGLang, vLLM, or TorchServe—to...

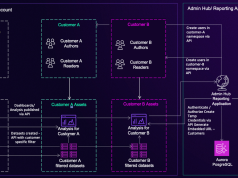

Drive organizational growth with Amazon Lex multi-developer CI/CD pipeline

As your conversational AI initiatives evolve, developing Amazon Lex assistants becomes increasingly complex. Multiple developers working on the same shared Lex instance lead to...