Gen AI News Talk

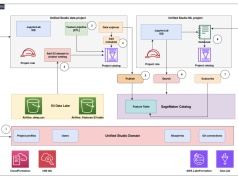

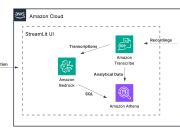

How Ricoh built a scalable intelligent document processing solution on AWS

This post is cowritten by Jeremy Jacobson and Rado Fulek from Ricoh.

This post demonstrates how enterprises can overcome document processing scaling limits by combining...

Unlock powerful call center analytics with Amazon Nova foundation models

Call center analytics play a crucial role in improving customer experience and operational efficiency. With foundation models (FMs), you can improve the quality and...

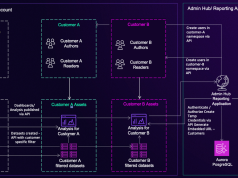

Embed Amazon Quick Suite chat agents in enterprise applications

Organizations can face two critical challenges with conversational AI. First, users need answers where they work—in their CRM, support console, or analytics portal—not in...

How Tines enhances security analysis with Amazon Quick Suite

Organizations face challenges in quickly detecting and responding to user account security events, such as repeated login attempts from unusual locations. Although security data...

How Lendi revamped the refinance journey for its customers using agentic AI in 16...

This post was co-written with Davesh Maheshwari from Lendi Group and Samuel Casey from Mantel Group.

Most Australians don’t know whether their home loan is...

Building a scalable virtual try-on solution using Amazon Nova on AWS: part 1

In this first post in a two-part series, we examine how retailers can implement a virtual try-on to improve customer experience. In part 2,...

Building specialized AI without sacrificing intelligence: Nova Forge data mixing in action

Large language models (LLMs) perform well on general tasks but struggle with specialized work that requires understanding proprietary data, internal processes, and industry-specific terminology....

Build safe generative AI applications like a Pro: Best Practices with Amazon Bedrock Guardrails

Are you struggling to balance generative AI safety with accuracy, performance, and costs? Many organizations face this challenge when deploying generative AI applications to...

Build a serverless conversational AI agent using Claude with LangGraph and managed MLflow on...

Customer service teams face a persistent challenge. Existing chat-based assistants frustrate users with rigid responses, while direct large language model (LLM) implementations lack the...

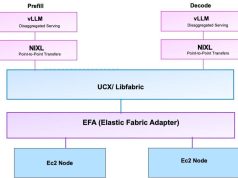

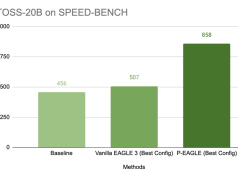

Large model inference container – latest capabilities and performance enhancements

Modern large language model (LLM) deployments face an escalating cost and performance challenge driven by token count growth. Token count, which is directly related...