AI is the defining technology of our time, quickly becoming core business infrastructure. It’s fueled by a diverse ecosystem of models: large and small, open and proprietary, generalist and specialist.

This variety is essential for a future where every application will be powered by AI, every country will build it and every company will use it. And it’s not a debate between open and closed innovation.

As NVIDIA founder and CEO Jensen Huang told attendees at a special session on open frontier models at NVIDIA GTC, “Proprietary versus open is not a thing. It’s proprietary and open.”

That’s why the future of AI innovation isn’t about a single massive model. Every industry, from healthcare to finance to manufacturing, tackles its own challenges, and each needs AI that can reason about its data and workflows. That requires systems of models, tuned and specialized for different modalities, domains and organizations, working together to solve a specific business problem.

NVIDIA is a major contributor to open source AI: it’s now the largest organization on Hugging Face, with nearly 4,000 team members. And at GTC, the company announced the NVIDIA Nemotron Coalition, a first-of-its-kind global collaboration of model builders and AI labs working to advance open, frontier-level foundation models through shared expertise, data and compute.

The first project stemming from the coalition will be a base model codeveloped by Mistral AI and NVIDIA, with coalition members contributing data, evaluations and domain expertise to support the model’s post-training and continued development. It’ll be shared with the open ecosystem and underpin the next generation of NVIDIA Nemotron models, which have been downloaded more than 45 million times from Hugging Face.

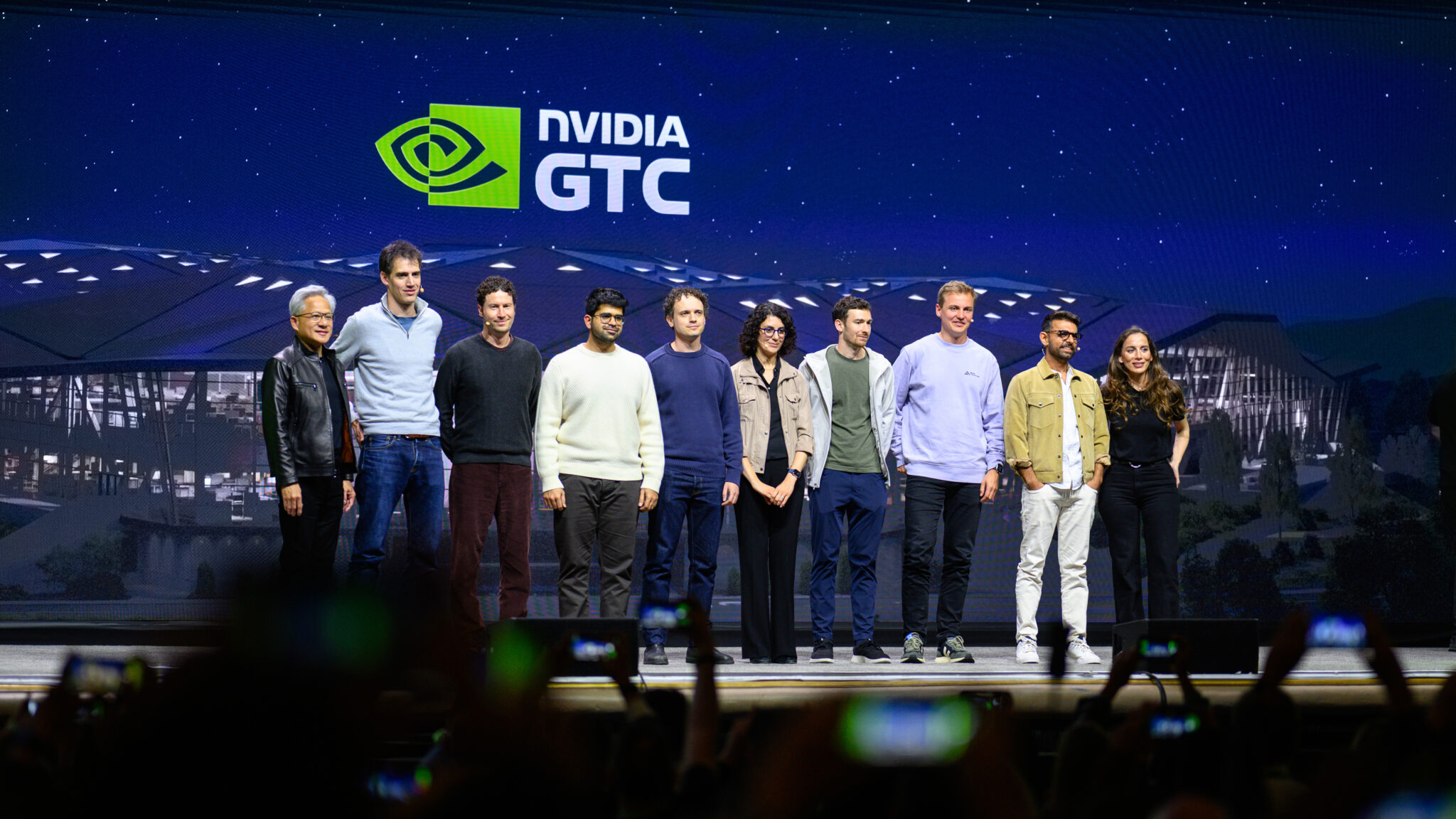

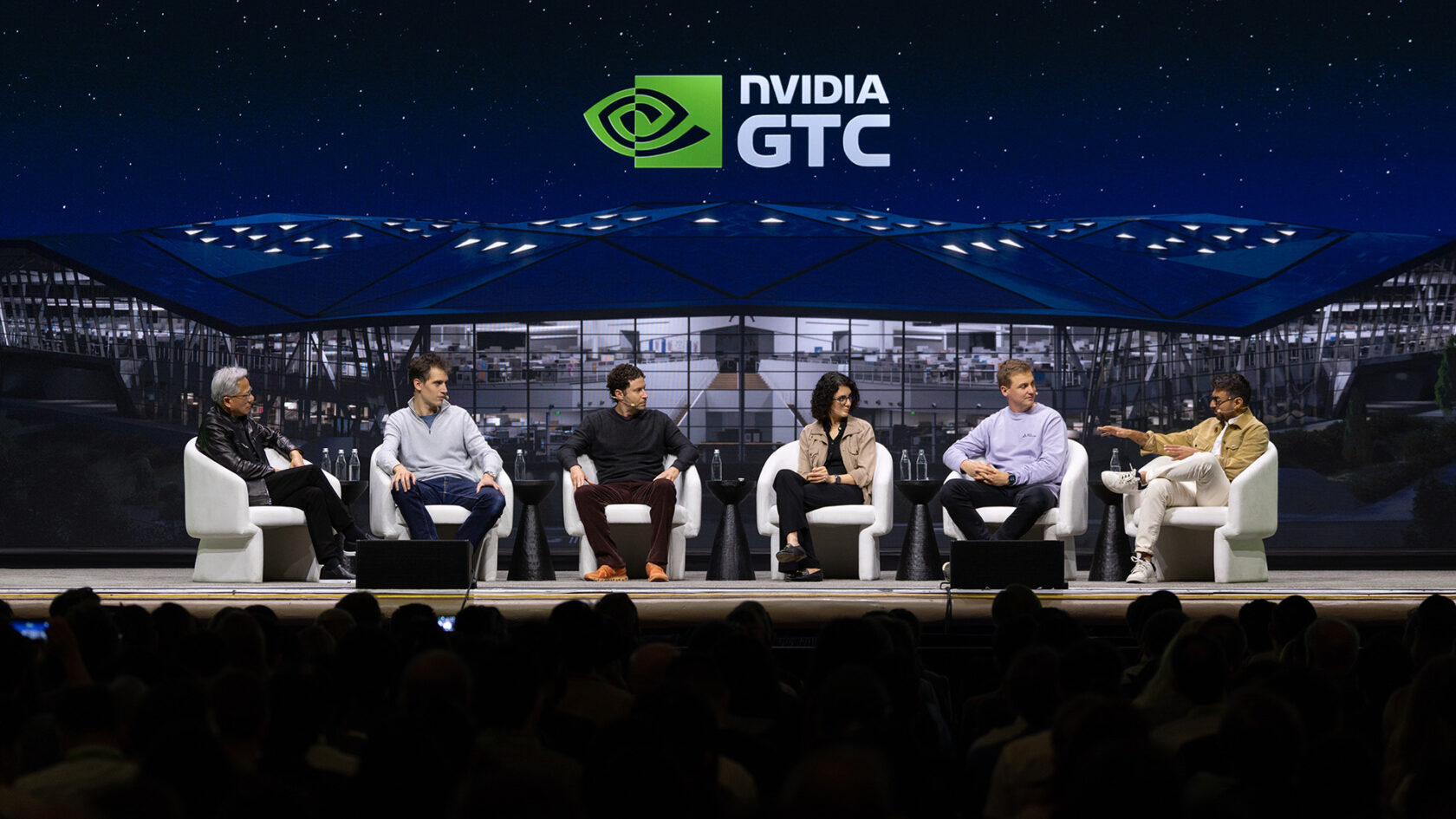

Several Nemotron Coalition members joined other leaders building and consuming open models for two back-to-back panels at GTC.

The first panel featured LangChain cofounder and CEO Harrison Chase, Thinking Machines Lab founder and CEO Mira Murati, Perplexity CEO and cofounder Aravind Srinivas, Cursor CEO and cofounder Michael Truell, and Reflection AI cofounder and CEO Misha Laskin. The second included Mistral cofounder and CEO Arthur Mensch, OpenEvidence CEO Daniel Nadler, and Black Forest Labs cofounder and CEO Robin Rombach, alongside Hanna Hajishirzi, senior director of natural language processing at Ai2, and Anjney Midha, founder of AMP PBC.

Five key points stood out from the conversation:

1. AI agents are becoming highly capable coworkers.

“We’re soon going to see agents really be coworkers that can take on tasks that take many hours or many days, and do incredibly complex workloads,” said Cursor’s Truell.

2. AI is not a single model — it’s an orchestrated system.

“What you want is a multimodal, multi-model and multi-cloud orchestra,” said Perplexity’s Srinivas. “All you’ve got to do is delegate your task. You don’t have to worry about which model is good at what — it’s for the orchestration system to figure it out.”

3. Openness fuels innovation across the model ecosystem.

“Models are fundamental knowledge infrastructure, and fundamental knowledge infrastructure yearns for openness,” said Reflection AI’s Laskin. “There’s a flourishing ecosystem of powerful, closed models but equally capable open models that are going to be coming over the next couple years.”

This combination of open and proprietary models drives advancements at frontier AI companies as well as in academia.

“There’s a lot of study to be done, and it cannot be done completely in the large labs,” said Thinking Machines Lab’s Murati. “This is where openness can be very helpful…it advances the science of AI, the science of intelligence.”

4. Open systems are trustworthy and accessible.

“At the end of the day, you’re delegating trust…and it’s much easier to trust an open system,” said AMP PBC’s Midha.

With a trusted system, developers can deploy long-running AI agents that take on increasingly complex tasks.

“The models and the systems orchestrating the models are going to get much more capable,” said LangChain’s Chase. “And so you’ll be able to have personal productivity agents that can take on more complex tasks that run for longer.”

Open ecosystems also foster collaboration, helping democratize access to AI.

“We believe that open-wide models should be the basis for building all the AI software in the world,” said Mistral’s Mensch. “By having an open ecosystem of people that have aligned incentives to create assets that are going to be great for humanity, we can accelerate progress and make sure that everybody gets access in a fair way across the world to artificial intelligence.”

5. Society needs generalist and specialist AI to provide value.

“You have to sort of shape AI the way you shape society,” said OpenEvidence’s Nadler, describing how hospitals are organized into generalists working alongside world-class specialists. “I think the shape of AI is going to reflect that.”

Specialized AI is on the rise because it lets organizations combine open foundations with their own proprietary data. That unique data is where they unlock real, differentiated value across business and academia.

“These days you might argue that progress in AI is getting limited into a few closed labs, but it’s actually very important to the vast majority of academia and researchers, or nonprofit and other places who want to also be part of this progress,” said Ai2’s Hajishirzi. “And we’ve seen that all this progress already has happened by everything being open.”

“It’s actually one of the most exciting times to work on either the frontier models, the big models or more specialized open models that then get deployed on device,” said Black Forest Labs’ Rombach. “There’s so many different frontiers, and all of them should have some open component.”

Watch the GTC session highlights on YouTube and start building with NVIDIA Nemotron open models.